
Releases · Arize-ai/phoenix


04.28.2026

04.28.2026: Session Notes API

Available in arize-phoenix 14.16.0+

Phoenix now supports creating session notes through POST /v1/session_notes. The generic session annotation endpoint now reserves name="note" for note-specific APIs, so use POST /v1/session_notes for session notes and POST /v1/session_annotations for regular annotations.

04.24.2026

04.24.2026: arize-phoenix-otel 0.16

Available in arize-phoenix-otel 0.16.0+

arize-phoenix-otel now re-exports the most common OpenInference context managers and semantic conventions, so manual instrumentation no longer requires installing openinference-instrumentation or openinference-semantic-conventions as separate dependencies.

- Context managers: using_session, using_user, using_metadata, using_tags, using_attributes, using_prompt_template, suppress_tracing (usable as with blocks or decorators)
- Semantic conventions: SpanAttributes, OpenInferenceSpanKindValues, OpenInferenceMimeTypeValues
- Single install: pip install "arize-phoenix-otel>=0.16.0" is enough for register() + context propagation

04.22.2026

04.22.2026: Secrets Settings Page

Available in arize-phoenix 14.11.0+

A new Settings → Secrets page lets admins add, replace, and delete encrypted LLM provider credentials (e.g. OPENAI_API_KEY, ANTHROPIC_API_KEY) directly in the Phoenix UI, with no REST API calls required.

- Add / Replace / Delete secrets from the browser

- Search and filter the secrets list by key name or owner

- Admin-only — all mutations require admin access

04.22.2026

04.22.2026: trace_id in Experiment Evaluators

Available in arize-phoenix-client 2.4.0+

Evaluator functions now receive the originating trace ID for each experiment run. Add a trace_id keyword argument to any evaluator function or Evaluator class method to access it.

- Optional: evaluators without trace_id are unaffected
- Works sync and async: supported on both function-based and protocol-based evaluators
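One way to picture the opt-in behavior is a runner that inspects each evaluator's signature and passes trace_id only when the evaluator declares it. The sketch below is an illustration under that assumption, not Phoenix's actual dispatch code; call_evaluator, length_check, and traced_check are hypothetical names.

```python
import inspect
from typing import Any, Callable, Dict


def call_evaluator(evaluator: Callable[..., Any], *, output: Any, trace_id: str) -> Any:
    """Call an evaluator, passing trace_id only if its signature declares it."""
    params = inspect.signature(evaluator).parameters
    accepts_var_kwargs = any(
        p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values()
    )
    kwargs: Dict[str, Any] = {"output": output}
    if "trace_id" in params or accepts_var_kwargs:
        kwargs["trace_id"] = trace_id
    return evaluator(**kwargs)


def length_check(output: str) -> bool:
    # Evaluator without trace_id: keeps working unchanged.
    return len(output) > 0


def traced_check(output: str, trace_id: str) -> dict:
    # Evaluator that opts in: it can record which trace produced the output.
    return {"score": float(bool(output)), "trace_id": trace_id}


legacy_result = call_evaluator(length_check, output="hi", trace_id="abc")
traced_result = call_evaluator(traced_check, output="hi", trace_id="abc")
```

Both evaluators run through the same hypothetical runner; only the second one sees the trace ID.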

04.20.2026

04.20.2026: Span Attribute Filtering

Available in arize-phoenix 14.9.0+ (server), arize-phoenix-client 2.4.0+ (Python), @arizeai/phoenix-client 6.7.0+ (TypeScript)

Filter spans by stored attribute values using the Python client, TypeScript client, REST API, or CLI. Multiple filters are AND-ed together; the value’s JS/Python type selects which storage type is matched.

- Python: client.spans.get_spans(..., attributes={"llm.model_name": "gpt-4o"})
- TypeScript: getSpans({ ..., attributes: { "llm.model_name": "gpt-4o" } })
- CLI: px span list --attribute "llm.model_name:gpt-4o"
- Type-aware: int, float, bool, and str values each match their corresponding stored type
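The AND-ed, type-aware semantics can be illustrated with a small in-memory filter. This is a sketch of the described behavior over plain dictionaries, not the server's query implementation; the matches helper and the sample spans are hypothetical.

```python
from typing import Any, Mapping


def matches(stored: Mapping[str, Any], filters: Mapping[str, Any]) -> bool:
    """Return True only if every filter matches in both value and exact type."""
    for key, wanted in filters.items():
        if key not in stored:
            return False
        actual = stored[key]
        # Compare exact types: in Python, True == 1, so isinstance or == alone
        # would let a bool filter match an int attribute (and vice versa).
        if type(actual) is not type(wanted) or actual != wanted:
            return False
    return True


spans = [
    {"llm.model_name": "gpt-4o", "retries": 1},
    {"llm.model_name": "gpt-4o", "retries": True},
    {"llm.model_name": "gpt-4o-mini", "retries": 1},
]
# Both filters must hold (AND), and retries must be stored as an int.
hits = [s for s in spans if matches(s, {"llm.model_name": "gpt-4o", "retries": 1})]
```

Only the first span survives: the second stores retries as a bool, and the third has a different model name.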

04.20.2026

04.20.2026: CLI Span Notes

Available in @arizeai/phoenix-cli 1.1.0+

px span add-note <span-id> --text "..." attaches a free-text note to any span. Pass --include-notes to px span list or px trace get to read notes back alongside span data.

04.20.2026

04.20.2026: Claude Opus 4.7 in the Playground

Available in arize-phoenix 14.9.0+

Claude Opus 4.7 is now available as a model option in the Phoenix Playground. Select it from the model picker to compare outputs against other Anthropic and cross-provider models.

04.16.2026

04.16.2026: Azure Managed Identity for PostgreSQL

Available in arize-phoenix 14.8.0+

Connect Phoenix to Azure Database for PostgreSQL using Microsoft Entra managed identity, with no static database password required. Install the azure extra and set PHOENIX_POSTGRES_USE_AZURE_MANAGED_IDENTITY=true.

- Zero-credential setup: tokens are fetched and refreshed automatically on each connection
- New [azure] extra: pip install 'arize-phoenix[azure]' pulls in azure-identity and aiohttp
- PHOENIX_POSTGRES_AWS_IAM_TOKEN_LIFETIME_SECONDS deprecated: silently ignored with a startup warning

04.14.2026

04.14.2026: CLI Annotation Commands

Available in @arizeai/phoenix-cli 1.0.4+

px span annotate and px trace annotate write labels, scores, and explanations to spans and traces from the terminal. Pass --include-annotations to px trace get or px span list to read annotations back alongside the data.

- px span annotate <span-id>: attach a label, score, or explanation to any span by OTel span ID
- px trace annotate <trace-id>: annotate a full trace by OTel trace ID
- Annotator kinds: HUMAN, LLM, or CODE; submitting again with the same name updates the existing entry

04.13.2026

04.13.2026: @arizeai/phoenix-otel 1.0

Available in @arizeai/phoenix-otel 1.0.0+

@arizeai/phoenix-otel now re-exports the full @arizeai/openinference-core and @arizeai/openinference-semantic-conventions surface from a single import.

- Tracing helpers: withSpan, traceChain, traceAgent, traceTool wrap functions with OpenInference spans and follow global provider changes
- observe decorator: trace class methods with TypeScript 5+ standard decorators while preserving this
- Context setters: setSession, setUser, setMetadata, setTags, setAttributes, setPromptTemplate propagate attributes to child spans
- Attribute builders: getLLMAttributes, getRetrieverAttributes, getEmbeddingAttributes, getToolAttributes, and more for raw OTel spans
- Semantic conventions: SemanticConventions and OpenInferenceSpanKind re-exported; no second dependency needed
- Redaction: OITracer + traceConfig or OPENINFERENCE_HIDE_* env vars strip sensitive attributes before export

04.10.2026

04.10.2026: Shareable Project URLs

Available in arize-phoenix 14.2.0+

Navigate to /redirects/projects/{project_name} and Phoenix resolves the name and redirects to the project page, with no internal ID required. Construct stable, bookmarkable links using the project name you already set in PHOENIX_PROJECT_NAME.

- Project by name: /redirects/projects/default resolves and redirects to the project page
- Other patterns: traces, spans, sessions, and prompt tags are also supported via /redirects/...
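A link of this form can be assembled from a base URL and a project name. The sketch below assumes a local server at http://localhost:6006 and assumes that names with spaces or slashes should be percent-encoded into a single path segment; project_redirect_url is a hypothetical helper, not part of any Phoenix package.

```python
from urllib.parse import quote


def project_redirect_url(base_url: str, project_name: str) -> str:
    """Build a name-based redirect link for a project."""
    # Encode the name so characters like spaces or "/" stay inside one path segment.
    encoded = quote(project_name, safe="")
    return f"{base_url.rstrip('/')}/redirects/projects/{encoded}"


# Assumed base URL for a locally running server.
url = project_redirect_url("http://localhost:6006/", "my project")
print(url)  # http://localhost:6006/redirects/projects/my%20project
```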

04.07.2026

04.07.2026: PostgreSQL Read Replica Routing

Available in arize-phoenix 14.0.0+

Route read-only queries (GraphQL resolvers, REST reads, dataloaders) to an optional PostgreSQL read replica via PHOENIX_SQL_DATABASE_READ_REPLICA_URL, reducing load on the primary under high span ingestion.

- Set and go: point the env var at your replica; writes always go to the primary

- No config change needed when a replica is not configured — falls back to primary
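The routing rule described above (reads go to the replica when one is configured, everything else goes to the primary) can be sketched as a small selection function. This is an illustration, not Phoenix's connection code; pick_database_url is hypothetical, and PHOENIX_SQL_DATABASE_URL is assumed here as the name of the primary connection variable.

```python
import os
from typing import Mapping, Optional


def pick_database_url(read_only: bool, env: Optional[Mapping[str, str]] = None) -> str:
    """Choose the connection URL for a query based on whether it only reads."""
    env = os.environ if env is None else env
    primary = env["PHOENIX_SQL_DATABASE_URL"]  # assumed name of the primary URL variable
    replica = env.get("PHOENIX_SQL_DATABASE_READ_REPLICA_URL")
    # Writes always use the primary; reads fall back to it when no replica is set.
    if read_only and replica:
        return replica
    return primary


with_replica = {
    "PHOENIX_SQL_DATABASE_URL": "postgresql://primary/phoenix",
    "PHOENIX_SQL_DATABASE_READ_REPLICA_URL": "postgresql://replica/phoenix",
}
without_replica = {"PHOENIX_SQL_DATABASE_URL": "postgresql://primary/phoenix"}

read_target = pick_database_url(True, with_replica)
write_target = pick_database_url(False, with_replica)
fallback_target = pick_database_url(True, without_replica)
```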

04.07.2026

04.07.2026: Breaking Changes — Phoenix v14

Breaking changes in arize-phoenix 14.0.0, arize-phoenix-evals 3.0.0, arize-phoenix-client 2.3.1+

Phoenix v14 removes several legacy APIs. See the migration guide for step-by-step instructions.

- CLI is now subcommand-first: phoenix serve --dev replaces phoenix --dev serve
- px.Client() removed: use from phoenix.client import Client with base_url= instead of endpoint=
- /v1/evaluations removed: use /v1/span_annotations, /v1/trace_annotations, or /v1/document_annotations
- Evals 1.0 removed: arize-phoenix-evals 3.0.0 drops the legacy/ subpackage; phoenix.experiments is replaced by phoenix.client.experiments
- GraphQL pagination requires first: Project.spans, Trace.spans, and ProjectSession.traces now require an explicit first argument (max 1000)
- Resume interrupted SDK experiments: continue missing or failed runs with resume_experiment and async_resume_experiment

04.03.2026

04.03.2026: ATIF Trajectory Upload

Available in arize-phoenix-client 2.3.0+ (Python)

upload_atif_trajectories_as_spans converts ATIF (Agent Trajectory Interchange Format) trajectory JSON files into OpenTelemetry span trees and uploads them to Phoenix. Visualize offline Harbor agent runs alongside live instrumented traces.

- Supports ATIF v1.0–v1.6 including multimodal content (images) in v1.6+
- Subagent linking: upload parent and child trajectories together to link them into one trace
- Idempotent: trace/span IDs are derived from session_id via SHA-256; re-uploading the same file is safe
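Deriving IDs from a hash is what makes re-uploads safe: the same input always yields the same IDs. The sketch below shows the general idea with hashlib; the exact derivation used by the client is not documented here, so the step_index input and the truncation lengths are assumptions, and derive_ids is a hypothetical helper.

```python
import hashlib
from typing import Tuple


def derive_ids(session_id: str, step_index: int) -> Tuple[str, str]:
    """Derive a 32-hex-char trace ID and a 16-hex-char span ID deterministically."""
    trace_digest = hashlib.sha256(session_id.encode("utf-8")).hexdigest()
    span_digest = hashlib.sha256(f"{session_id}:{step_index}".encode("utf-8")).hexdigest()
    # OpenTelemetry trace IDs are 16 bytes and span IDs are 8 bytes,
    # so the hex digests are truncated to 32 and 16 characters.
    return trace_digest[:32], span_digest[:16]


first = derive_ids("session-123", 0)
again = derive_ids("session-123", 0)   # same inputs, same IDs
other = derive_ids("session-123", 1)   # same trace, different span
```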

04.01.2026

04.01.2026: Python 3.14 Support

Available in arize-phoenix 13.21.0+, arize-phoenix-client 2.2.0+, arize-phoenix-evals 2.13.0+

Phoenix server, Python client SDK, and evals library now support Python 3.14 on Linux and macOS.

04.01.2026

04.01.2026: Secrets Management REST API

Available in arize-phoenix 13.21.0+

Admin users can store encrypted LLM provider API keys in Phoenix via PUT /v1/secrets. Atomically upsert or delete multiple secrets in one request; values are AES-encrypted at rest and never returned in responses.

- PUT /v1/secrets: batch upsert/delete with value: null to remove a key
- Admin-only, atomic, silent no-op for deleting non-existent keys
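The batch semantics (a value upserts, null deletes, deleting a missing key does nothing) can be modeled over a plain dictionary. This is only a model of the described behavior: the request body shape is assumed to be a key-to-value mapping, encryption is not shown, and apply_secret_batch is a hypothetical helper.

```python
from typing import Dict, Mapping, Optional


def apply_secret_batch(
    store: Mapping[str, str], batch: Mapping[str, Optional[str]]
) -> Dict[str, str]:
    """Return a new store with the whole batch applied; the input is not mutated."""
    updated = dict(store)
    for key, value in batch.items():
        if value is None:
            updated.pop(key, None)  # deleting a missing key is a silent no-op
        else:
            updated[key] = value    # insert or replace
    return updated


before = {"OPENAI_API_KEY": "old"}
after = apply_secret_batch(
    before,
    {"OPENAI_API_KEY": "new", "ANTHROPIC_API_KEY": "abc", "MISSING_KEY": None},
)
```

Building a new dictionary and swapping it in afterwards is one simple way to keep the update all-or-nothing.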

04.01.2026

04.01.2026: get_traces — Retrieve Traces via Python SDK

Available in arize-phoenix 13.15.0+ (server), arize-phoenix-client 2.2.0+ (Python)

client.traces.get_traces() fetches traces for a project with time range filtering, session filtering, sort control, and automatic cursor-based pagination.

- include_spans=True to embed full span detail in each trace
- session_id to filter to one or more sessions
- Async variant available on AsyncClient

03.30.2026

03.30.2026: Delete Prompts and Prompt Version Tags

Available in arize-phoenix 13.20.0+

Two new REST endpoints let you delete prompts and remove tags from prompt versions programmatically.

- DELETE /v1/prompts/{prompt_identifier}: permanently deletes a prompt and all its versions, tags, and labels
- DELETE /v1/prompt_versions/{id}/tags/{tag_name}: removes a single tag from a specific prompt version

03.24.2026

03.24.2026: Prompt Version Diff View

Available in arize-phoenix 13.18.0+

The Prompts UI now shows a line-by-line diff between any two prompt versions. See exactly what changed (message content, tool call arguments, and tool results) without leaving Phoenix.

- Side-by-side diff across the full chat template, including all content part types

03.24.2026

03.24.2026: Evals Accept Structured Data Inputs

Available in arize-phoenix-evals 2.12.0+

Evaluators now accept dicts and lists as template variable values, JSON-serializing them automatically. Built-in evaluators also accept **kwargs forwarded to the LLM on every call (e.g., temperature=0.0).

- Structured inputs (dicts, lists) are JSON-serialized before prompt rendering, so no manual json.dumps() is needed
- LLM invocation kwargs accepted by all built-in evaluators (FaithfulnessEvaluator, CorrectnessEvaluator, etc.)
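The serialization step can be pictured as a pre-pass over the template variables before rendering. The sketch below uses str.format as a stand-in for the library's template engine; render is a hypothetical helper and not the evals implementation.

```python
import json
from typing import Any, Mapping


def render(template: str, variables: Mapping[str, Any]) -> str:
    """Render a template, JSON-serializing dict and list values first."""
    prepared = {
        name: json.dumps(value) if isinstance(value, (dict, list)) else value
        for name, value in variables.items()
    }
    return template.format(**prepared)


prompt = render(
    "Context: {context}\nAnswer: {answer}",
    {"context": {"doc_id": 7, "tags": ["a", "b"]}, "answer": "42"},
)
print(prompt)
# Context: {"doc_id": 7, "tags": ["a", "b"]}
# Answer: 42
```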

03.23.2026

03.23.2026: px auth status Shows Username and Role

Available in arize-phoenix 13.17.0+ and @arizeai/phoenix-cli 0.12.0+

px auth status now verifies credentials against the server and displays the authenticated username and role alongside the endpoint and token info.

03.22.2026

03.22.2026: px spans — Fetch and Filter Spans from the CLI

Available in arize-phoenix 13.16.0+ and @arizeai/phoenix-cli 0.12.0+

px spans fetches spans for a project with filtering by kind, status code, name, trace ID, and time window. Output to the terminal or save to JSON for offline use.

- --span-kind, --status-code, --name, --trace-id filters
- --last-n-minutes / --since time range controls
- --include-annotations to attach span annotations to the output

03.22.2026

03.22.2026: px self update and GET /v1/user

Available in arize-phoenix 13.16.0+ and @arizeai/phoenix-cli 0.12.0+

px self update upgrades the installed CLI to the latest version, detecting npm, pnpm, bun, or Deno automatically. GET /v1/user returns the authenticated user’s profile (username, email, role) or an anonymous representation when auth is disabled.

03.18.2026

03.18.2026: Span Filters — Name, Kind, and Status Code

Available in arize-phoenix 13.15.0+ (server), arize-phoenix-client 2.1.0+ (Python), @arizeai/phoenix-client 6.5.1+ (TypeScript)

Filter spans directly by name, span kind (LLM, CHAIN, TOOL, etc.), and status code (OK, ERROR, UNSET) in both the REST API and SDK clients. Filters are OR-combined within a field and AND-combined across fields.

- name filter to match one or more span names
- span_kind filter for LLM, CHAIN, TOOL, RETRIEVER, and other span kinds
- status_code filter to isolate error, success, or unset spans
- Available in Python via client.spans.get_spans(name=..., span_kind=..., status_code=...)
- Available in TypeScript via getSpans({ name, spanKind, statusCode })
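The combination rule (OR within a field, AND across fields) is easy to misread, so here is a small in-memory model of it. The span dictionaries and span_matches are hypothetical; the real filtering happens on the server.

```python
from typing import Any, Iterable, Mapping, Optional


def span_matches(
    span: Mapping[str, Any],
    name: Optional[Iterable[str]] = None,
    span_kind: Optional[Iterable[str]] = None,
    status_code: Optional[Iterable[str]] = None,
) -> bool:
    """OR within each field (any listed value), AND across the fields that are set."""
    criteria = {"name": name, "span_kind": span_kind, "status_code": status_code}
    for field, allowed in criteria.items():
        if allowed is None:
            continue  # an unset filter does not constrain the result
        if span.get(field) not in set(allowed):
            return False
    return True


spans = [
    {"name": "chat", "span_kind": "LLM", "status_code": "OK"},
    {"name": "search", "span_kind": "TOOL", "status_code": "ERROR"},
    {"name": "plan", "span_kind": "CHAIN", "status_code": "ERROR"},
]
# (LLM or TOOL) and ERROR
selected = [
    s["name"]
    for s in spans
    if span_matches(s, span_kind=["LLM", "TOOL"], status_code=["ERROR"])
]
```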

03.13.2026

03.13.2026: List Traces by Project

Available in arize-phoenix 13.15.0+

A new GET /v1/projects/{project_identifier}/traces REST endpoint lists traces with time filtering, sort order, cursor pagination, optional inline spans, and session filtering.

- GET /v1/projects/{project}/traces with sort, order, limit, cursor, include_spans, and session_identifier params

03.11.2026

03.11.2026: Session Turns API

Available in arize-phoenix-client 2.0.0+ (Python) and @arizeai/phoenix-client 6.4.0+ (TypeScript)

Phoenix now provides a dedicated session turns API that reconstructs the ordered input/output pairs across all traces in a session. The new get_session_turns() method (Python) and getSessionTurns() function (TypeScript) extract root span input.value / output.value attributes and return chronologically ordered SessionTurn objects.

- Chronological turn ordering from session traces sorted by start time

- SessionTurnIO with MIME type: supports text/plain, application/json, and image types

- Batched root span fetching with pagination to handle large sessions

- Async variants available in both Python and TypeScript clients
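The reconstruction described above amounts to sorting root spans by start time and reading two attributes from each. The sketch below models that over plain dictionaries; the Turn dataclass and build_turns are hypothetical and do not mirror the actual SessionTurn type.

```python
from dataclasses import dataclass
from typing import Any, Iterable, List, Mapping, Optional


@dataclass
class Turn:
    trace_id: str
    start_time: str
    input: Optional[str]
    output: Optional[str]


def build_turns(root_spans: Iterable[Mapping[str, Any]]) -> List[Turn]:
    """Order root spans chronologically and extract their input/output values."""
    # ISO-8601 timestamps in the same format and timezone sort correctly as strings.
    ordered = sorted(root_spans, key=lambda span: span["start_time"])
    return [
        Turn(
            trace_id=span["trace_id"],
            start_time=span["start_time"],
            input=span["attributes"].get("input.value"),
            output=span["attributes"].get("output.value"),
        )
        for span in ordered
    ]


turns = build_turns([
    {"trace_id": "t2", "start_time": "2026-03-11T10:05:00Z",
     "attributes": {"input.value": "And tomorrow?", "output.value": "Rain."}},
    {"trace_id": "t1", "start_time": "2026-03-11T10:00:00Z",
     "attributes": {"input.value": "Weather today?", "output.value": "Sunny."}},
])
```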

03.10.2026

03.10.2026: Session Management REST APIs

Available in arize-phoenix 13.13.0+ (server), arize-phoenix-client 1.31.0+ (Python), @arizeai/phoenix-client 6.1.0+ (TypeScript)

Phoenix now exposes session management through REST API endpoints on the server. Retrieve individual sessions, list sessions with pagination and project filtering, and delete sessions with cascading cleanup of associated traces, spans, and annotations.

- Single session retrieval by ID or GlobalID with optional project filtering

- Bulk session listing with pagination, project filtering, and sorting

- Session deletion with automatic cascade through traces and spans

- DataFrame export in Python for sessions data analysis

- Configurable timeouts for all session operations

03.09.2026

03.09.2026: Filter Spans by Trace ID and Parent Relationships

Available in arize-phoenix 13.12.0+ (server), arize-phoenix-client 2.0.0+ (Python), @arizeai/phoenix-client 6.3.0+ (TypeScript)

Span queries now support filtering by trace ID and parent relationships, enabling precise navigation of trace hierarchies. Query for root spans using parent_id=null or retrieve all children of a specific parent span to reconstruct execution trees programmatically.

- Trace ID filtering to retrieve spans from specific traces

- Parent ID filtering to query root spans or span children

- Multi-trace queries via repeated trace ID parameters

- Composable with existing filters like time ranges and limits
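Once spans carry a parent_id, an execution tree can be rebuilt client-side with a single pass. The sketch below works on plain dictionaries with assumed span_id and parent_id keys; build_tree is a hypothetical helper, not a client method.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, List, Mapping, Tuple


def build_tree(spans: Iterable[Mapping[str, Any]]) -> Tuple[List[str], Dict[str, List[str]]]:
    """Return (root span IDs, mapping of parent span ID to child span IDs)."""
    children: Dict[str, List[str]] = defaultdict(list)
    roots: List[str] = []
    for span in spans:
        if span["parent_id"] is None:
            roots.append(span["span_id"])  # root spans have no parent
        else:
            children[span["parent_id"]].append(span["span_id"])
    return roots, dict(children)


roots, children = build_tree([
    {"span_id": "a", "parent_id": None},
    {"span_id": "b", "parent_id": "a"},
    {"span_id": "c", "parent_id": "a"},
    {"span_id": "d", "parent_id": "b"},
])
```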

03.04.2026

03.04.2026: Incremental Evaluation Metrics in Playground

Available in arize-phoenix 13.8.0+

The Playground now displays evaluation metrics, cost, and latency aggregates in real time as dataset experiments run. Metrics update incrementally every ~2 seconds, providing immediate feedback on experiment performance without waiting for completion.

03.04.2026

03.04.2026: Brute Force Login Protection

Available in arize-phoenix 13.8.0+

Phoenix now automatically protects login endpoints against brute force attacks. After 5 consecutive failed attempts, the account is temporarily locked for 5 minutes. Enabled by default with configurable thresholds via PHOENIX_BRUTE_FORCE_LOGIN_PROTECTION_MAX_ATTEMPTS.

03.07.2026

03.07.2026: Unified Dataset Upload with Drag-and-Drop

Available in arize-phoenix 13.9.0+

Dataset creation from files is now streamlined with automatic file type detection and a unified upload experience. Drag-and-drop CSV or JSONL files anywhere in the upload form, and Phoenix automatically parses headers and previews data without loading entire files into memory.

- Automatic format detection for CSV and JSONL files

- Drag-and-drop file selection with visual feedback

- Streaming parser that handles large files efficiently

- RFC 4180 CSV support including quoted fields, escaped quotes, and BOM handling

- Detailed error messages for parsing issues with line-by-line feedback
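Previewing a file without loading it fully comes down to reading the header and a bounded number of rows from a stream. The sketch below uses Python's csv module to show quoted fields, escaped quotes, and BOM stripping; it illustrates the idea only and is not the uploader's parser (which runs in the browser). preview_csv is a hypothetical helper.

```python
import csv
import io
from itertools import islice
from typing import List, TextIO, Tuple


def preview_csv(stream: TextIO, max_rows: int = 5) -> Tuple[List[str], List[List[str]]]:
    """Read the header and at most max_rows data rows from a text stream."""
    reader = csv.reader(stream)
    header = next(reader, [])
    # Strip a leading byte-order mark if the file was saved with one.
    if header and header[0].startswith("\ufeff"):
        header[0] = header[0][1:]
    # islice stops after max_rows, so the rest of the stream is never read.
    rows = list(islice(reader, max_rows))
    return header, rows


sample = io.StringIO(
    '\ufeffinput,output\r\n"He said ""hi""","a,b"\r\nx,y\r\nz,w\r\n', newline=""
)
header, rows = preview_csv(sample, max_rows=2)
```

The doubled quotes inside the first field decode to a single quote character, and the comma inside "a,b" stays part of the field.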

03.10.2026

03.10.2026: Drag-and-Drop Column Assignment for Datasets

Available in arize-phoenix 13.13.0+

Dataset creation now features a drag-and-drop column assignment interface. Assign columns to input, output, or metadata buckets with automatic suggestions based on common naming conventions, and preview exactly how your data will appear in the final dataset.

- Visual column assignment with draggable chips and drop targets

- Smart auto-assignment based on column names like “input”, “output”, “reference”

- Live dataset preview showing the final structure as you make changes

- Keyboard navigation support for accessibility

- Raw data preview in tabular format alongside final dataset view

03.08.2026

03.08.2026: Extended Model Provider Support

Available in arize-phoenix 13.10.0+ (Cerebras, Fireworks, Groq, Moonshot) and arize-phoenix 13.11.0+ (Perplexity, Together AI)

Phoenix Playground now supports six additional OpenAI-compatible model providers: Perplexity AI, Together AI, Cerebras, Fireworks AI, Groq, and Moonshot (Kimi). Access hundreds of new models including specialized reasoning models and fine-tuned variants through familiar OpenAI-compatible APIs.

- Perplexity AI for research and web-grounded responses

- Together AI with models from Moonshot, DeepSeek, Qwen, and GLM

- Cerebras for ultra-fast inference with Llama models

- Fireworks AI with Llama 4 Scout and Maverick variants

- Groq for low-latency Llama and Qwen deployments

- Moonshot (Kimi) with extended 128k and 32k context models

- Cost tracking enabled for Cerebras, Fireworks, Groq, and Moonshot

03.10.2026

03.10.2026: Provider Visibility Controls

Available in arize-phoenix 13.13.0+

Control which model providers appear in the Phoenix UI using the PHOENIX_ALLOWED_PROVIDERS environment variable. Set it to a comma-separated list of provider names to show only those providers, keeping your interface focused on the tools you actually use.

- Allow-list mode to show only specified providers

- Case-insensitive configuration with typo detection warnings

- Set to NONE to hide all providers from the UI
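The parsing rules listed above (comma-separated, case-insensitive, NONE hides everything, warnings for likely typos) can be sketched as follows. The provider names in KNOWN_PROVIDERS are an illustrative subset chosen for this example, and parse_allowed_providers is a hypothetical helper rather than the server's implementation.

```python
import difflib
from typing import List, Optional, Tuple

# Illustrative subset; the accepted provider names are an assumption here.
KNOWN_PROVIDERS = ["openai", "anthropic", "groq", "cerebras"]


def parse_allowed_providers(raw: Optional[str]) -> Tuple[Optional[List[str]], List[str]]:
    """Return (allowed, warnings); allowed is None when no restriction applies."""
    if raw is None or not raw.strip():
        return None, []
    if raw.strip().upper() == "NONE":
        return [], []  # hide every provider
    allowed: List[str] = []
    warnings: List[str] = []
    for token in raw.split(","):
        name = token.strip().lower()  # case-insensitive matching
        if not name:
            continue
        if name in KNOWN_PROVIDERS:
            allowed.append(name)
        else:
            guess = difflib.get_close_matches(name, KNOWN_PROVIDERS, n=1)
            hint = f"; did you mean {guess[0]!r}?" if guess else ""
            warnings.append(f"unknown provider {name!r}{hint}")
    return allowed, warnings


allowed, warnings = parse_allowed_providers("OpenAI, antropic")
```

Here the misspelled entry is dropped from the allow-list and reported with a suggestion instead of failing silently.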

03.08.2026

03.08.2026: Latest OpenAI GPT Models

Available in arize-phoenix 13.10.0+

Phoenix Playground now includes the latest OpenAI models: GPT-5.4 family, GPT-5.3-chat-latest, GPT-5.2-pro variants, and o3-pro-2025-06-10. All models include cost tracking and are ready to use in experiments and prompt testing.

03.08.2026

03.08.2026: Project Editing from Settings

Available in arize-phoenix 13.10.0+

Edit project descriptions and customize gradient colors directly from the Project Settings page. Click the edit button to update project metadata inline, with changes persisting immediately across the Phoenix UI.

03.11.2026

03.11.2026: Breaking Change: Removed Deprecated Annotations API

Breaking change in arize-phoenix-client 2.0.0

The deprecated client.annotations module has been removed. All annotation methods remain available on client.spans. Update your code to use client.spans.add_span_annotation() and client.spans.log_span_annotations() instead of the client.annotations variants.

03.05.2026

03.05.2026: SDK Session Retrieval

Session retrieval is now available in the Python client (client.sessions.get(), client.sessions.list(), get_sessions_dataframe()) and the TypeScript client (getSession(), listSessions()). Both SDKs support automatic pagination and async usage for working with session turns data.

02.27.2026

02.27.2026: Sessions API and CLI Support

REST API endpoints for listing and getting sessions (GET /v1/sessions, GET /v1/sessions/{id}) and CLI commands (px sessions, px session <id>) for exploring multi-turn conversations from the terminal.

02.24.2026

02.24.2026: Claude Agent SDK Integration

Phoenix now supports tracing for Anthropic’s Claude Agent SDK via a new OpenInference instrumentation package. The integration automatically captures AGENT and TOOL spans, giving you full visibility into your Claude Agent SDK applications.

- Install @arizeai/openinference-instrumentation-claude-agent-sdk alongside @arizeai/phoenix-otel
- See agent execution flows and tool invocations in Phoenix’s trace UI

02.14.2026

02.14.2026: Phoenix 13.0

Phoenix 13.0 is a major release centered on Dataset Evaluators, with support for custom model providers, OpenAI Responses API type selection, and extensive Playground and dataset/experiment UX improvements.

Highlights include:

- Attach evaluator suites directly to datasets and run them server-side on every Playground experiment.

- Reuse server-managed custom providers (OpenAI, Azure OpenAI, Anthropic, AWS Bedrock, Google GenAI) across Playground, prompts, and dataset evaluators.

- Choose OpenAI API type per configuration (chat.completions.create or responses.create) with automatic parameter compatibility handling.

- Use new Playground workflows such as cancellation, template variable autocomplete, appended messages, improved prompt selection, and URL state for prompt IDs/versions/tags.

- Get expanded dataset and experiment ergonomics, model/provider updates (including Claude Opus 4.6 and Azure OpenAI v1 migration), and infrastructure improvements like a session ID index for spans.

02.12.2026

02.12.2026: OpenAI Responses API Type Support

Phoenix now supports selecting the OpenAI API type for OpenAI and Azure OpenAI calls in the Playground and custom providers. Choose Chat Completions (chat.completions.create) or Responses (responses.create) depending on the model and features you want to use.

Key capabilities:

- API type selection: Choose Chat Completions or Responses per model configuration.

- Custom provider support: OpenAI and Azure OpenAI custom providers can be configured with an API type for consistent routing.

- Parameter compatibility: Phoenix maps shared invocation parameters to the chosen API type and filters unsupported fields automatically.

02.12.2026

02.12.2026: Dataset Evaluators

Requires Phoenix 13.x.

Dataset evaluators let you attach evaluators directly to a dataset so they automatically run server-side whenever you execute experiments from the Phoenix UI (for example, from the Playground). This turns your dataset into a reusable evaluation suite and removes the need to reconfigure evaluators for every experiment.

Key capabilities:

- Attach once, evaluate everywhere: Add LLM or built-in code evaluators to a dataset and reuse them across Playground experiments.

- Flexible input mapping: Map evaluator inputs to dataset fields so each example is evaluated consistently.

- Built-in visibility: Each evaluator captures traces for debugging and refinement, with details available from the evaluator view.

02.11.2026

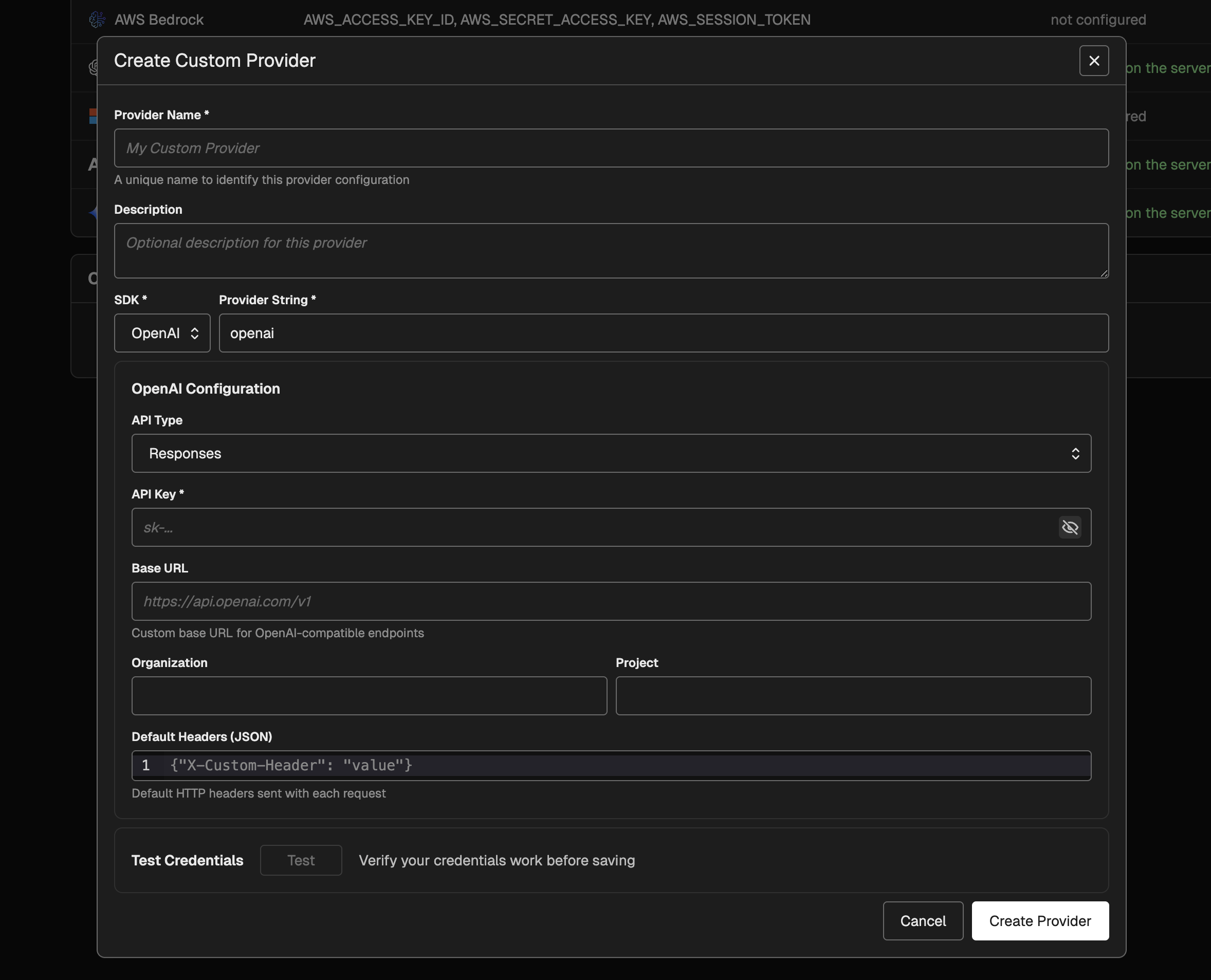

02.11.2026: Custom Providers for Playground and Prompts

Phoenix now supports custom providers for OpenAI, Azure OpenAI, Anthropic, AWS Bedrock, and Google GenAI. Custom providers let you store provider credentials and routing configuration on the server and reuse them across the playground and saved prompt versions.

Key capabilities:

- Centralized configuration: Manage provider credentials and routing in Settings and reuse them across the playground and prompt versions.

- SDK-specific authentication: Support API keys, Azure AD token providers, or default credentials (Azure/AWS) depending on the SDK.

- Model selection integration: Custom providers show up in model menus as their own provider group and inherit model listings from the underlying SDK.

- Request-level overrides: Continue to supply custom request headers per prompt while using custom provider configuration for routing and authentication.

02.09.2026

02.09.2026: Claude Opus 4.6 Model Support

Phoenix playground now supports Claude Opus 4.6, Anthropic’s latest flagship model. Select claude-opus-4-6 in the Anthropic provider or anthropic.claude-opus-4-6-v1 in AWS Bedrock to start using the model with full extended thinking parameter support and accurate cost tracking.

Key capabilities:

- Anthropic provider integration: Access Claude Opus 4.6 directly through the playground with the thinking invocation parameter enabled for extended reasoning workflows
- AWS Bedrock support: Deploy Opus 4.6 through Bedrock with the region-specific model identifier
- Automatic cost tracking: Token costs are calculated using the latest pricing ($25 per million output tokens, plus cache read/write rates)

01.31.2026

01.31.2026: Tool Selection and Tool Invocation Evaluators

Available in arize-phoenix-evals 0.16.0+ (Python) and @arizeai/phoenix-evals 1.3.0+ (TypeScript)

Phoenix now provides two specialized evaluators for assessing AI agent tool usage. The Tool Selection Evaluator judges whether an agent correctly chose the most appropriate tool from its available toolkit to answer a user’s question, without evaluating the parameters passed. The Tool Invocation Evaluator assesses whether the agent correctly invoked a tool with proper parameters, JSON formatting, and safe values.

These evaluators help developers:

- Identify tool selection errors where agents choose suboptimal or incorrect tools

- Debug parameter issues including hallucinated fields, malformed JSON, and incorrect values

- Improve tool descriptions and agent prompts based on systematic evaluation

- Validate multi-tool and multi-turn interactions across complex agent workflows

These evaluators are available as ToolSelectionEvaluator and ToolInvocationEvaluator in Python’s phoenix.evals.metrics module, and as createToolSelectionEvaluator and createToolInvocationEvaluator in TypeScript.

01.28.2026

01.28.2026: Configurable Email Extraction for OAuth2 Providers

Available in Phoenix 12.33.1+

Phoenix now supports custom email extraction from OAuth2 identity providers through the PHOENIX_OAUTH2_{IDP}_EMAIL_ATTRIBUTE_PATH environment variable. This solves authentication issues with providers like Azure AD/Entra ID where the standard email claim may be null but alternative claims like preferred_username contain the user’s identity.

Configure email extraction using JMESPath expressions. Phoenix uses the standard email claim when no custom path is specified. JMESPath expressions are validated at startup for immediate feedback on configuration errors.

01.22.2026

01.22.2026: CLI Commands for Prompts, Datasets, and Experiments

Available in @arizeai/phoenix-cli 0.4.0+

The Phoenix CLI now provides commands for managing prompts, datasets, and experiments directly from your terminal. Access version-controlled prompts, create evaluation datasets, and run experiments, all without leaving your development environment.

Prompt Management:

- List and view prompts with px prompts and px prompt <name>

- Pipe prompts to AI assistants for optimization and analysis

- Text format output with XML-style role tags for LLM consumption

- Create and manage datasets with px datasets and px dataset <name>

- Add examples and query dataset contents

- Export datasets for offline analysis

- Run experiments and compare results across configurations

- View experiment details and performance metrics

- Track changes across prompt and model variations

01.23.2026

01.23.2026: CLI Authentication Configuration

Available in @arizeai/phoenix-cli 0.4.0+

The Phoenix CLI now includes enhanced authentication configuration commands, resolving database race conditions and improving connection reliability. Users can configure authentication settings directly through the CLI for more predictable and stable connections to Phoenix servers.

01.21.2026

01.21.2026: Create Datasets from Traces with Span Associations

Available in arize-phoenix-client 1.28.0+ (Python) and @arizeai/phoenix-client 2.0.0+ (TypeScript)

Phoenix now enables converting production traces into curated datasets while preserving bidirectional links back to source spans. Use the new span_id_key parameter to maintain traceability from evaluation examples to their original production executions.

- Batch resolution of span IDs for optimal performance

- Graceful fallback when span IDs are missing or invalid

- Backwards compatible with existing dataset creation workflows

- Bidirectional navigation between evaluation results and production traces

01.19.2026

01.19.2026: Export Annotations with Traces

Available in @arizeai/phoenix-cli 0.3.0+

The Phoenix CLI now supports exporting annotations alongside traces using the --include-annotations flag. Annotations (including manual labels, LLM evaluation scores, and programmatic feedback) are now preserved when exporting traces for offline analysis, backup, or migration workflows.

01.22.2026

01.22.2026: CLI Prompt Commands: Pipe Prompts to AI Assistants 📝

Available in @arizeai/phoenix-cli 0.4.0+

Phoenix CLI now supports prompt introspection with px prompts and px prompt. List prompts, view their content, and pipe them directly to AI assistants like Claude Code for optimization suggestions. The --format text option outputs prompts with XML-style role tags, ideal for analysis workflows.

01.21.2026

01.21.2026: Create Datasets from Traces with Span Associations 🔗

Available in arize-phoenix-client 1.28.0+ (Python) and @arizeai/phoenix-client 2.0.0+ (TypeScript)

The Phoenix client now enables converting production traces into curated datasets while preserving associations back to source spans. Query spans using client methods, then create datasets with span associations to maintain bidirectional links. Use this to build golden datasets from validated interactions, curate edge cases from failed traces, or create regression test suites from critical user flows.

01.21.2026

01.21.2026: Phoenix CLI: Datasets, Experiments & Annotations 🧪

Available in @arizeai/phoenix-cli 0.2.0+

The Phoenix CLI now supports datasets, experiments, and annotations. Pull evaluation data, export experiment results, and access human feedback directly from the terminal. Works well with AI coding assistants for analyzing test cases and reviewing results.

01.17.2026

01.17.2026: Phoenix CLI: Terminal Access for AI Coding Assistants 🖥️

Available in @arizeai/phoenix-cli 0.1.0+

AI coding assistants operate through terminals and files: they run shell commands, read output, and process data. The new Phoenix CLI makes trace data accessible through these interfaces, enabling tools like Claude Code, Cursor, and Windsurf to query your Phoenix instance directly. Export traces to JSON, pipe to jq, or save to disk for analysis.

01.05.2026

01.05.2026: Appended Messages for Playground Experiments 💬

Available in Phoenix 13.0+

The Prompt Playground now supports appending conversation history from dataset examples to your prompts. This enables A/B testing workflows for comparing models and system prompts against the same conversation threads. Specify a dot-notation path to messages in your dataset (e.g., messages or input.messages) and run experiments across all prompt variants.

12.20.2025

12.20.2025: Improved User Preferences ⚙️

Available in Phoenix 12.27+

Phoenix now offers enhanced user preference settings, giving you more control over your experience. This update includes theme selection in viewer preferences and programming language preference.

12.12.2025

12.12.2025: Support for Gemini Tool Calls 🤖

Available in Phoenix 12.25+

Phoenix now supports Gemini tool calls, enabling enhanced integration capabilities with Google’s Gemini models. This update allows for more robust and feature-complete interactions with Gemini, including improved request/response translation and advanced conversation handling with tool calls.

12.09.2025

12.09.2025: Span Notes API 📝

Available in Phoenix 12.21+

New dedicated endpoints for span notes enable open coding and seamless annotation integrations. Add notes to spans programmatically using the Phoenix client in both Python and TypeScript, which suits debugging sessions, human feedback, and building custom annotation pipelines.

12.06.2025

12.06.2025: LDAP Authentication Support 🔐

Available in Phoenix 12.20+

Phoenix now supports authentication against LDAP directories, enabling integration with enterprise identity infrastructure including Microsoft Active Directory, OpenLDAP, and any LDAP v3 compliant directory. Key features include group-based role mapping, multi-server failover, TLS encryption, and automatic user provisioning.

12.04.2025

12.04.2025: Evaluator Message Formats 💬

Available in phoenix-evals 0.22+ (Python) and @arizeai/phoenix-evals 2.0+ (TypeScript)

Phoenix evaluators now support flexible prompt formats including simple string templates and OpenAI-style message arrays for multi-turn prompts. Python supports both f-string and mustache syntax, while TypeScript uses mustache syntax. Adapters handle provider-specific transformations automatically.

12.03.2025
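To make the two template shapes in the Evaluator Message Formats entry concrete, here is a minimal standard-library sketch that renders a plain string template and an OpenAI-style message array, using either f-string-style ({name}) or mustache-style ({{name}}) placeholders. It does not use or reproduce the phoenix-evals API: render, render_text, and the variable names are assumptions made for illustration.

```python
import re
from typing import Union

Message = dict[str, str]
Template = Union[str, list[Message]]

# Matches mustache placeholders such as {{question}} or {{ question }}.
_MUSTACHE = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_text(text: str, variables: dict[str, str], syntax: str) -> str:
    """Fill placeholders in one string using the chosen syntax."""
    if syntax == "f-string":
        return text.format(**variables)
    if syntax == "mustache":
        return _MUSTACHE.sub(lambda match: variables[match.group(1)], text)
    raise ValueError(f"unknown template syntax: {syntax!r}")


def render(template: Template, variables: dict[str, str], syntax: str = "mustache") -> Template:
    """Render a string template, or each message's content in a message array."""
    if isinstance(template, str):
        return render_text(template, variables, syntax)
    return [{**msg, "content": render_text(msg["content"], variables, syntax)} for msg in template]


variables = {"question": "What is Phoenix?", "answer": "An observability tool."}

# Simple string template with f-string-style placeholders.
simple = render("Q: {question}\nA: {answer}", variables, syntax="f-string")

# Multi-turn message array with mustache placeholders.
chat = render(
    [
        {"role": "system", "content": "Grade the answer."},
        {"role": "user", "content": "Q: {{question}} A: {{ answer }}"},
    ],
    variables,
)
```

The message-array form keeps roles intact and only substitutes into content, so a provider adapter could still translate the roles afterwards.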

12.03.2025: TypeScript createEvaluator 🧪

Available in @arizeai/phoenix-evals 2.0+

The createEvaluator utility provides a type-safe way to build custom code evaluators for experiments in TypeScript. Define evaluators with full type inference, access input, output, expected, and metadata parameters, and integrate seamlessly with runExperiment.

12.01.2025

12.01.2025: Splits on Experiments Table 📊

Available in Phoenix 12.20+

You can now view and filter experiment results by data splits directly in the experiments table. This enhancement makes it easier to analyze performance across different data subsets (such as train, validation, and test) and compare how your models perform on each split.

11.29.2025

11.29.2025: Add support for Claude Opus 4-5 🤖

Available in Phoenix 12.18+

11.27.2025

11.27.2025: Show Server Credential Setup in Playground API Keys 🔐

Available in Phoenix 12.18+

11.25.2025

11.25.2025: Split Assignments When Uploading a Dataset 🗂️

Available in Phoenix 12.18+

11.23.2025

11.23.2025: Repetitions for Manual Playground Invocations 🛝

Available in Phoenix 12.17+

11.14.2025

11.14.2025: Expanded Provider Support with OpenAI 5.1 + Gemini 3 🔧

Available in Phoenix 12.15+

This update enhances LLM provider support by adding OpenAI v5.1 compatibility (including reasoning capabilities), expanding support for Google DeepMind/Gemini models, and introducing the gemini-3 model variant.

11.12.2025

11.12.2025: Updated Anthropic Model List 🧠

Available in Phoenix 12.15+

This update enhances the Anthropic model registrations in Arize Phoenix by adding support for the 4.5 Sonnet/Haiku variants and removing several legacy 3.x Sonnet/Opus entries.

11.09.2025

11.09.2025: OpenInference TypeScript 2.0 💻

- Added easy manual instrumentation with the same decorators, wrappers, and attribute helpers found in the Python openinference-instrumentation package.

- Introduced function tracing utilities that automatically create spans for sync/async function execution, including specialized wrappers for chains, agents, and tools.

- Added decorator-based method tracing, enabling automatic span creation on class methods via the @observe decorator.

- Expanded attribute helper utilities for standardized OpenTelemetry metadata creation, including helpers for inputs/outputs, LLM operations, embeddings, retrievers, and tool definitions.

- Overall, tracing workflows, agent behavior, and external tool calls is now significantly simpler and more consistent across languages.

11.07.2025

11.07.2025: Timezone Preference 🌍

Available in Phoenix 12.11+

11.05.2025

11.05.2025: Metadata for Prompts 🗂️

Available in Phoenix 12.10+

metadata field for prompts.

11.03.2025

11.03.2025: Playground Dataset Label Display 🏷️

Available in Phoenix 12.10+

11.01.2025

11.01.2025: Resume Experiments and Evaluations 🔄

Available in Phoenix 12.10+

You can now resume experiments and evaluations. If certain examples fail, there is no need to repeat the parts of a task that already completed. Resume support adds new management capabilities on both the server and the clients, saving effort and making your experimentation workflow more flexible.

10.30.2025
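The idea behind resuming an experiment, as described in the Resume Experiments and Evaluations entry, can be sketched as "rerun only what has no successful result yet". The code below is a generic illustration under that assumption, not the Phoenix client API: run_pending, flaky_task, and the results layout are hypothetical.

```python
from typing import Any, Callable


def run_pending(
    examples: dict[str, Any],
    task: Callable[[Any], Any],
    results: dict[str, dict[str, Any]],
) -> list[str]:
    """Run the task only for examples lacking a successful result; return the ids attempted."""
    attempted = []
    for example_id, payload in examples.items():
        previous = results.get(example_id)
        if previous is not None and previous["ok"]:
            continue  # already completed, so a resume skips it
        attempted.append(example_id)
        try:
            results[example_id] = {"ok": True, "output": task(payload)}
        except Exception as exc:
            results[example_id] = {"ok": False, "error": str(exc)}
    return attempted


# Hypothetical task that fails once for one input, then succeeds on retry.
_failed_once: set[int] = set()


def flaky_task(value: int) -> int:
    if value == 2 and value not in _failed_once:
        _failed_once.add(value)
        raise RuntimeError("transient failure")
    return value * 10


examples = {"a": 1, "b": 2, "c": 3}
results: dict[str, dict[str, Any]] = {}

first = run_pending(examples, flaky_task, results)   # attempts all three; "b" fails
second = run_pending(examples, flaky_task, results)  # resumes and retries only "b"
```

Keeping results keyed by example ID is the design choice that makes the second call cheap: completed work is recognized and skipped rather than recomputed.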

10.30.2025: Metadata Support for Experiment Run Annotations 🧩

Available in Phoenix 12.9+

10.28.2025

10.28.2025: Enable AWS IAM Auth for DB Configuration 🔐

Available in Phoenix 12.9+

Added support for AWS IAM-based authentication for PostgreSQL connections to AWS Aurora and RDS. This enhancement enables the use of short-lived IAM tokens instead of static passwords, improving security and compliance for database access.

10.26.2025

10.26.2025: Add Split Edit Menu to Examples ䷖

Available in Phoenix 12.8+

10.24.2025

10.24.2025: Filter Prompts Page by Label 🏷️

Available in Phoenix 12.7+

10.20.2025

10.20.2025: Splits ䷖

Available in Phoenix 12.7+

In Arize Phoenix, splits let you categorize your dataset into distinct subsets (such as train, validation, or test), enabling structured workflows for experiments and evaluations. This capability offers more flexibility in how you organize, filter, and compare your data across different stages or experimental conditions.

10.18.2025

10.18.2025: Filter Annotations in Compare Experiments Slideover ✍️

Available in Phoenix 12.7+

10.15.2025

10.15.2025: Enhanced Filtering for Examples Table 🔍

Available in Phoenix 12.5+

10.13.2025

10.13.2025: View Traces in Compare Experiments 🧪

Available in Phoenix 12.5+

10.10.2025

10.10.2025: Viewer Role 👀

Available in Phoenix 12.5+

Introduced a new VIEWER role with enforced read-only permissions across both GraphQL and REST APIs, improving access control and security.

10.08.2025

10.08.2025: Dataset Labels 🏷️

Available in Phoenix 12.3+

10.06.2025

10.06.2025: Paginate Compare Experiments 📃

Available in Phoenix 12.3+

10.05.2025

10.05.2025: Load Prompt by Tag into Playground 🛝

Available in Phoenix 12.2+

10.03.2025

10.03.2025: Prompt Version Editing in Playground 🛝

Available in Phoenix 12.2+

09.29.2025

09.29.2025: Day 0 support for Claude Sonnet 4.5 ⚡

Available in Phoenix 12.1+

09.27.2025

09.27.2025: Dataset Splits 📊

Available in Phoenix 12.0+

Added support for custom dataset splits to organize examples by category.

09.26.2025

09.26.2025: Session Annotations 🗂️

Available in Phoenix 12.0+

09.25.2025

09.25.2025: Repetitions 🔁

Available in Phoenix 11.38+

09.24.2025

09.24.2025: Custom HTTP headers for requests in Playground 🛠️

Available in Phoenix 11.36+

09.23.2025

09.23.2025: Repetitions in experiment compare slideover 🔄

Available in Phoenix 11.36+

09.22.2025

09.22.2025: Helm configurable image registry & IPv6 support 🌐

Available in Phoenix 11.35+

09.17.2025

09.17.2025: Experiment compare details slideover in list view 🔍

Available in Phoenix 11.34+

09.15.2025

09.15.2025: Prompt Labels 🏷️

Available in Phoenix 11.33+

09.12.2025

09.12.2025: Enable Paging in Experiment Compare Details 📄

Available in Phoenix 11.33+

J / K). Pagination
